In this tutorial we will learn how to build a Jersey-based REST web services project in Java. If you are new to REST web services, this tutorial will also help you create your first basic sample REST API project.

Libraries used

Jersey 2.28

Note: Jersey 2.28, the first Jakarta EE implementation of JAX-RS 2.1, has been released and is available in Maven Central.

https://jersey.github.io/

Tools used

Maven

JDK 1.8 (Jersey 2.26 and later require Java 8 or newer, so older JDKs will not work)

Eclipse/IntelliJ (you can use whichever you are comfortable with)

Tomcat Server 8

This tutorial follows the steps below. It's quite easy!

Step 1: Create a Java web project in Eclipse and convert it to a Maven project.

Step 2: Add the Jersey dependencies to pom.xml and update the project.

Step 3: Create an API class.

Step 4: Add the servlet and servlet mapping to web.xml.

Step 5: Run the project. Hurray!

POM Dependencies

<properties>
    <jersey2.version>2.28</jersey2.version>
    <jaxrs.version>2.1.1</jaxrs.version>
</properties>

<dependencies>
    <!-- JAX-RS -->
    <dependency>
        <groupId>javax.ws.rs</groupId>
        <artifactId>javax.ws.rs-api</artifactId>
        <version>${jaxrs.version}</version>
    </dependency>

    <!-- Jersey 2.28 -->
    <dependency>
        <groupId>org.glassfish.jersey.containers</groupId>
        <artifactId>jersey-container-servlet</artifactId>
        <version>${jersey2.version}</version>
    </dependency>
    <dependency>
        <groupId>org.glassfish.jersey.core</groupId>
        <artifactId>jersey-server</artifactId>
        <version>${jersey2.version}</version>
    </dependency>

    <!-- Needed since Jersey 2.26 (explained below); without it Jersey throws an injection exception at startup -->
    <dependency>
        <groupId>org.glassfish.jersey.inject</groupId>
        <artifactId>jersey-hk2</artifactId>
        <version>${jersey2.version}</version>
    </dependency>
</dependencies>

Here is the reason. Starting with Jersey 2.26, HK2 is no longer a hard dependency of Jersey. Instead, Jersey defines an SPI that acts as a facade for the dependency injection provider, in the form of the InjectionManager and InjectionManagerFactory. For Jersey to run, an implementation of InjectionManagerFactory must be on the classpath. Jersey ships two implementations: one for HK2 (jersey-hk2) and one for CDI (jersey-cdi2-se).
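To make the failure mode concrete, the sketch below imitates this kind of lookup with the JDK's own java.util.ServiceLoader. It is an illustration only: `DemoInjectionFactory` and `lookupFactory` are hypothetical names, and Jersey's real lookup code is different. The point is that a facade interface with no registered implementation on the classpath can only fail at startup, which is roughly what happens when jersey-hk2 is missing.

```java
import java.util.Iterator;
import java.util.ServiceLoader;

public class InjectionSpiSketch {

    /** Hypothetical stand-in for a DI facade such as InjectionManagerFactory. */
    public interface DemoInjectionFactory {
        String name();
    }

    /**
     * Returns the first implementation registered under META-INF/services,
     * or fails if no jar on the classpath provides one.
     */
    public static DemoInjectionFactory lookupFactory() {
        Iterator<DemoInjectionFactory> providers =
                ServiceLoader.load(DemoInjectionFactory.class).iterator();
        if (!providers.hasNext()) {
            throw new IllegalStateException("DemoInjectionFactory not found");
        }
        return providers.next();
    }

    public static void main(String[] args) {
        try {
            System.out.println("Using " + lookupFactory().name());
        } catch (IllegalStateException e) {
            // Expected here: this sketch ships no implementation.
            System.out.println("Startup would fail: " + e.getMessage());
        }
    }
}
```

Adding the jersey-hk2 dependency plays the role of dropping a jar with a registered implementation onto the classpath, so the lookup succeeds.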

1. API code

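package com.programmertoday.restjersey.api;

import javax.ws.rs.GET;
import javax.ws.rs.Path;
import javax.ws.rs.Produces;
import javax.ws.rs.core.MediaType;

// Root resource: every method in this class is served under /api
@Path("/api")
public class JerseyResource {

    // Handles GET requests to /api/message and returns plain text
    @GET
    @Path("/message")
    @Produces(MediaType.TEXT_PLAIN)
    public String getMyMessage() {
        return "Hello World - its Jersey 2 REST API";
    }
}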

2. web.xml

<?xml version="1.0" encoding="UTF-8"?>
<web-app xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xmlns="http://java.sun.com/xml/ns/javaee"
         xsi:schemaLocation="http://java.sun.com/xml/ns/javaee
                             http://java.sun.com/xml/ns/javaee/web-app_3_0.xsd"
         id="WebApp_ID" version="3.0">
    <display-name>RESTful_Project</display-name>
    <welcome-file-list>
        <welcome-file>index.html</welcome-file>
        <welcome-file>index.htm</welcome-file>
        <welcome-file>index.jsp</welcome-file>
        <welcome-file>default.html</welcome-file>
        <welcome-file>default.htm</welcome-file>
        <welcome-file>default.jsp</welcome-file>
    </welcome-file-list>

    <!-- Jersey servlet: scans the package below for resource classes -->
    <servlet>
        <servlet-name>jersey-servlet</servlet-name>
        <servlet-class>org.glassfish.jersey.servlet.ServletContainer</servlet-class>
        <init-param>
            <param-name>jersey.config.server.provider.packages</param-name>
            <param-value>com.programmertoday.restjersey.api</param-value>
        </init-param>
        <load-on-startup>1</load-on-startup>
    </servlet>

    <!-- All requests under /rest/ are routed to Jersey -->
    <servlet-mapping>
        <servlet-name>jersey-servlet</servlet-name>
        <url-pattern>/rest/*</url-pattern>
    </servlet-mapping>
</web-app>

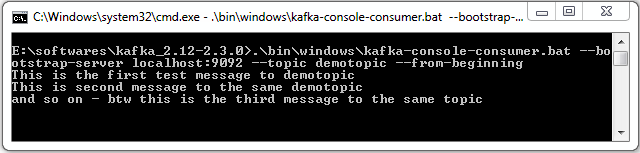

Test the code

Add a Tomcat server to your IDE and run the project on it.

Using any REST client or a web browser such as Google Chrome, open the URL below to call the API.

http://localhost:8080/RESTful_Project/rest/api/message
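The URL above is assembled from four pieces: the web application's context path, the servlet mapping from web.xml, the class-level @Path and the method-level @Path. As a rough sketch, the plain-JDK client below builds that URL and calls it with HttpURLConnection. The class name `MessageClient` and its helper methods are made up for this example, and the context path `RESTful_Project` assumes the project is deployed under that name on port 8080; adjust both if your setup differs.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.HttpURLConnection;
import java.net.URI;
import java.nio.charset.StandardCharsets;

public class MessageClient {

    /** Joins the base URL with path segments, avoiding doubled slashes. */
    public static String buildUrl(String base, String... segments) {
        StringBuilder url = new StringBuilder(base.replaceAll("/+$", ""));
        for (String segment : segments) {
            // Drop a trailing "/*" wildcard (servlet mapping) and surrounding slashes.
            String clean = segment.replaceAll("/\\*$", "").replaceAll("^/+|/+$", "");
            if (!clean.isEmpty()) {
                url.append('/').append(clean);
            }
        }
        return url.toString();
    }

    /** Sends a GET request and returns the response body as text. */
    public static String fetch(String url) throws IOException {
        HttpURLConnection conn =
                (HttpURLConnection) URI.create(url).toURL().openConnection();
        conn.setRequestMethod("GET");
        conn.setConnectTimeout(3000);
        conn.setReadTimeout(3000);
        try (BufferedReader reader = new BufferedReader(
                new InputStreamReader(conn.getInputStream(), StandardCharsets.UTF_8))) {
            StringBuilder body = new StringBuilder();
            String line;
            while ((line = reader.readLine()) != null) {
                body.append(line);
            }
            return body.toString();
        } finally {
            conn.disconnect();
        }
    }

    public static void main(String[] args) {
        // Context path, servlet mapping, class @Path, method @Path (assumed values).
        String url = buildUrl("http://localhost:8080",
                "RESTful_Project", "/rest/*", "/api", "/message");
        System.out.println("GET " + url);
        try {
            System.out.println(fetch(url));
        } catch (IOException e) {
            System.out.println("Request failed (is Tomcat running?): " + e.getMessage());
        }
    }
}
```

With Tomcat running, the program should print the request line followed by the text returned by getMyMessage(). If the server is not reachable, it prints a short failure message instead of a stack trace.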

Summary

In this article we learned how to create a simple Jersey-based REST API project and how to run it. It's simple and easy!

Hope you liked the article!